The future of interaction is multimodal. But combining touch with air gestures (and potentially voice input) isn’t a typical UI design task.

At Exipple, our design and engineering teams work together to create user interfaces for various contexts that respond to hand gestures and adapt to users’ movements and physical properties. Drawing from an iterative design, development, and evaluation process, I’d like to share our insights on what works in gestural interaction.

Design for gesture discovery

Gestures are often perceived as a natural way of interacting with screens and objects, whether we’re talking about pinching a mobile screen to zoom in on a map or waving a hand in front of the TV to switch to the next movie. But how natural are those gestures, really?

For users who’ve never experienced a particular interaction paradigm before, gestures are unfamiliar territory. We all intuitively know how to see more detail on a map displayed on a touchscreen, but now imagine looking at that map from a distance on a large display. What if somebody told you that you could move your hands in some natural, intuitive way to zoom into the map without touching the screen? What would be the first gesture you’d try? Faced with that question, each of us would likely come up with our own “natural” gesture.


Design for discovery is crucial. Make sure you provide the right cues: design signifiers that help users easily discover how they can interact with gestures. These can be visual tips showing which gesture triggers a particular action. After repeated use, these discovery tips will no longer be necessary because users will have learned the gestures.

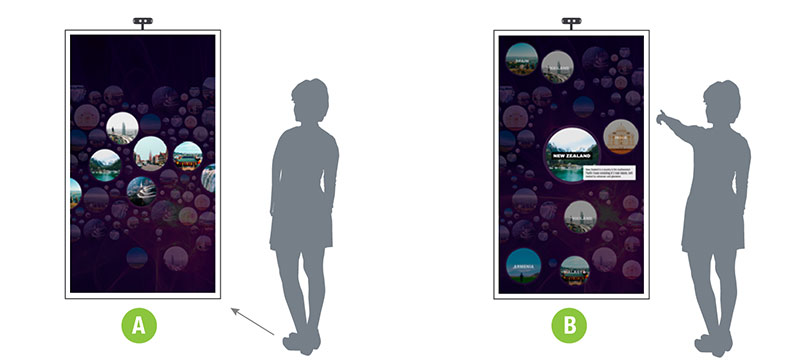

It’s possible to design animations that develop progressively to reveal an opportunity to interact in a different way. For example, to make users aware that they can interact from a distance without touching the screen, we created a menu that reveals more information as the hand points toward the screen. Initially there’s a playful arrangement of floating images (A). Moving closer and raising a hand toward the images reveals that each one is actually a category that contains content (B).
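The progressive reveal can be thought of as a function from hand state to a reveal level. The sketch below assumes the tracking layer reports the hand’s distance from the screen and whether the hand is raised; the names `HandState` and `reveal_progress` and both distance thresholds are hypothetical, not Exipple’s implementation.

```python
from dataclasses import dataclass

# Hypothetical thresholds in meters; real values would come from user testing.
FAR_M = 3.0   # at or beyond this distance: only the floating images (A)
NEAR_M = 1.5  # at or within this distance: categories fully revealed (B)


@dataclass
class HandState:
    distance_m: float  # distance between the hand and the screen, in meters
    raised: bool       # whether the hand is raised toward the screen


def reveal_progress(hand: HandState) -> float:
    """Return 0.0 for state A (floating images) up to 1.0 for state B (categories)."""
    if not hand.raised:
        return 0.0  # a lowered hand keeps the playful idle arrangement
    # Linear ramp between FAR_M and NEAR_M, clamped to [0, 1].
    t = (FAR_M - hand.distance_m) / (FAR_M - NEAR_M)
    return max(0.0, min(1.0, t))
```

A renderer could read this value every frame, for instance to fade in category labels, so the reveal tracks the hand continuously instead of snapping between two states.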

Why direct translation from touch doesn’t work

Last year we did a small, informal study. We invited people to our studio and showed them some familiar interfaces on a TV display: menus with icons, a map, a grid, and a carousel view. We asked them to imagine how they’d interact with these interfaces from a distance using air gestures.

The interfaces were actually a set of mini gestural prototypes. After we recorded their expectations, participants explored the prototypes and gave us feedback. A clear pattern emerged: their expectations were largely driven by their familiarity with touch gestures on mobile devices. All of our participants applied the mental models from their mobile phones to air gestures. Sometimes we could even tell iOS users from Android users by their expectations of how the interface would work.


But we quickly encountered a challenge: what’s most intuitive is not necessarily the most efficient or the easiest to use. A mouse, for example, is a high-precision device that affords a good level of control. A human hand moving through the air in three dimensions is far less precise. We might think we’re moving our hand along the X-axis while we’re actually also drifting slightly along the other two axes.

We can’t expect to achieve the same level of precision. Focusing on making very careful movements will inevitably create some tension—and “stiff hands” are by no means a natural interaction.

When you touch a screen, the point of contact becomes a starting point: a point of reference. Now compare the typical two-finger pinch to zoom in and out with using your fingers or both hands in the air to form a similar pinch or spread gesture. The point of reference for the zoom level becomes unclear. And because there’s no surface to lift your fingers off to stop the interaction, the start and end points become ambiguous too.

An example to avoid: the air-gesture equivalent of a pinch.

Try not to translate touch gestures directly to air gestures, even though they might feel familiar and easy. Gestural interaction requires a fresh approach, one that might feel unfamiliar at first but in the long run will give users more control and take UX design further.

Kill that “jumpy” cursor

If you’re using computer vision technology for your project (for example, capturing gestures with depth-sensing cameras such as Kinect, Asus, or Orbbec devices), you know that hands and fingers aren’t always tracked at their exact position as they move.

Other technologies might offer better precision, but they usually require users to wear special devices. Because the computer can’t always “see” the hand’s exact position as it moves, the result is what we call the trembling hand: a somewhat “nervous”, unstable cursor or pointer on the screen.

“Eliminate the need for a cursor as feedback, but provide an alternative.”

Designing a different pointer or cursor won’t help much, because it still has to follow a moving hand across the screen. You could ask developers to filter out very subtle hand movements to avoid this effect. However, this comes at the high price of lost responsiveness and precision: a slightly slower cursor lags behind the hand, which gives users less of a sense of control over the interface. And losing user control is something we can’t afford.
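The responsiveness trade-off is easy to see in numbers. The article doesn’t say which filter a developer would use; the sketch below assumes a plain exponential moving average, where `alpha` controls how strongly new samples are trusted. The helper `settle_frames` is hypothetical and measures how long the filtered cursor takes to catch up with a hand that jumps and then holds still.

```python
from typing import Iterable, List, Tuple

Point = Tuple[float, float]


def ema_filter(samples: Iterable[Point], alpha: float) -> List[Point]:
    """Exponential moving average over raw (x, y) hand positions.

    alpha = 1.0 passes the raw positions through (no lag, full jitter);
    smaller values suppress more jitter but make the output trail the hand.
    """
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    out: List[Point] = []
    for x, y in samples:
        if not out:
            out.append((x, y))  # seed the filter with the first sample
        else:
            px, py = out[-1]
            out.append((px + alpha * (x - px), py + alpha * (y - py)))
    return out


def settle_frames(alpha: float, tolerance: float = 0.05, max_frames: int = 1000) -> int:
    """Frames the filtered cursor needs to come within `tolerance` of a hand
    that jumps from x = 0 to x = 1 and then stays still (a step input)."""
    samples = [(0.0, 0.0)] + [(1.0, 0.0)] * max_frames
    smoothed = ema_filter(samples, alpha)
    for frame, (x, _) in enumerate(smoothed[1:], start=1):
        if 1.0 - x <= tolerance:
            return frame
    return max_frames
```

With a 5% tolerance, `alpha = 1.0` settles in one frame and `alpha = 0.5` needs five; at an assumed 30 frames per second, that is roughly 170 ms of visible lag bought in exchange for a calmer cursor.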

So, what should we do?

Kill that cursor. Touchscreens don’t have cursors either. Eliminate the need for a cursor as feedback, but provide an alternative: make images and objects “pop out” and respond to the user’s hand movement instantly, without any pointer.

This fundamentally changes the way you think about the user interface. It’s not the web, and it’s not a mobile touch experience, either.
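One way to read “pop out without a pointer” is to let every object scale with the hand’s proximity, so the objects themselves become the feedback. This is a minimal sketch assuming the hand position has already been projected into screen pixels; `Tile`, `tile_scales`, `POP_SCALE`, and `INFLUENCE_PX` are hypothetical names and values.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Tile:
    name: str
    cx: float  # tile center x, in screen pixels
    cy: float  # tile center y, in screen pixels


BASE_SCALE = 1.0
POP_SCALE = 1.25      # hypothetical: how far the tile under the hand "pops out"
INFLUENCE_PX = 300.0  # hypothetical: beyond this distance the hand has no effect


def tile_scales(tiles: List[Tile], hand: Tuple[float, float]) -> Dict[str, float]:
    """Scale each tile by how close the projected hand is to its center.

    No cursor is drawn: a tile grows as the hand approaches it, so the
    response is immediate and continuous.
    """
    hx, hy = hand
    scales: Dict[str, float] = {}
    for t in tiles:
        d = math.hypot(t.cx - hx, t.cy - hy)
        closeness = max(0.0, 1.0 - d / INFLUENCE_PX)  # 1 at the center, 0 at the edge
        scales[t.name] = BASE_SCALE + (POP_SCALE - BASE_SCALE) * closeness
    return scales
```

Because there’s no small pointer to watch, minor tracking noise shows up as slight changes in tile size rather than as a trembling cursor; whether that feels calm enough is something to verify with users.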

Think freely

Try to liberate your creative thinking from the standard web and mobile UI patterns you’re familiar with. Forget about buttons—think actions. Imagine that you no longer have screens, but instead you want to use gestures to control devices in the environment around you. How would you tell the TV to lower the volume? How would you turn the light on?

Symbolic and iconic gestures, such as making the “Shhh” sign with your index finger to tell your TV to lower the volume, are instant and expressive. They might be context-specific and require some user onboarding, but once users learn them, they’re easy to remember and perform.

Some successful gestures we’ve developed to control media playback:

Aim to create associations between the gesture and the action that it triggers. These can be based on meaning or a visual reference that’s easy to memorize. It’s not easy—you need to consider aspects like cultural context. For example, gestural expressions that are perfectly acceptable in one country or culture might mean something rude in another. Symbols that are very prominent in some contexts might not be helpful in others.

Relying on symbolic gestures for all sorts of interactions would probably result in far too many gestures to remember. Treat them as quick, powerful shortcuts: something worth assigning to actions your users perform repeatedly and frequently.

Minimize false positives

One of the biggest challenges for a computer is distinguishing real intent from all the accidental hand movements people make naturally, like moving their hands around while talking to someone.

It’s easy to trigger actions accidentally and experience an erratic user interface that changes when it shouldn’t. As a UI designer, you need to work closely with developers to find out what works well and what doesn’t so that you can avoid those false positives.

A good start: Always design gestures tied to a particular context or conditions that need to be met. Is music currently playing? Then the gesture triggers something. If not, it does nothing.
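Tying gestures to conditions can be expressed as a small routing table in which each binding carries its own context check. This is a minimal sketch; `MediaContext`, `GestureRouter`, and the `"shhh"` binding are hypothetical and assume a separate recognizer already emits gesture names.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional, Tuple


@dataclass
class MediaContext:
    music_playing: bool = False  # hypothetical piece of app state


Condition = Callable[[MediaContext], bool]
Action = Callable[[MediaContext], str]


class GestureRouter:
    """Routes recognized gestures to actions only when their context condition holds."""

    def __init__(self) -> None:
        self._bindings: Dict[str, Tuple[Condition, Action]] = {}

    def bind(self, gesture: str, condition: Condition, action: Action) -> None:
        self._bindings[gesture] = (condition, action)

    def handle(self, gesture: str, ctx: MediaContext) -> Optional[str]:
        binding = self._bindings.get(gesture)
        if binding is None:
            return None  # not a gesture this screen cares about
        condition, action = binding
        if not condition(ctx):
            return None  # context not met: treat it as an accidental movement
        return action(ctx)


router = GestureRouter()
# Hypothetical binding: "shhh" lowers the volume, but only while music is playing.
router.bind("shhh", lambda ctx: ctx.music_playing, lambda ctx: "volume_down")
```

The same “shhh” detected while nothing is playing returns `None`, so a stray finger near the lips changes nothing on screen.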


Time is an important factor in telling a deliberate gesture apart from accidental hand movements. For instance, if I point at an object for more than one second, it’s a good sign that I actually want to interact with it.
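A dwell timer captures this rule: the interaction fires only once the hand has stayed on the same target for longer than the threshold. The one-second value comes from the text; the `DwellDetector` class and its frame-by-frame `update` interface are hypothetical.

```python
from typing import Optional

DWELL_S = 1.0  # from the text: pointing for more than one second signals intent


class DwellDetector:
    """Fires once when the hand has pointed at the same target for longer than dwell_s."""

    def __init__(self, dwell_s: float = DWELL_S) -> None:
        self.dwell_s = dwell_s
        self._target: Optional[str] = None
        self._since = 0.0
        self._fired = False

    def update(self, target: Optional[str], now: float) -> Optional[str]:
        """Feed the currently pointed-at target (or None) with a timestamp in seconds.

        Returns the target's id on the frame the dwell completes, otherwise None.
        """
        if target != self._target:
            # The hand moved to a new target (or away): restart the timer.
            self._target = target
            self._since = now
            self._fired = False
            return None
        if target is not None and not self._fired and now - self._since > self.dwell_s:
            self._fired = True  # fire only once per continuous dwell
            return target
        return None
```

A hand that merely sweeps across objects while the user talks keeps resetting the timer and never triggers anything.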

Distance is another one. If you’re designing an interactive installation for a museum or visitor center, you’d probably want it to recognize the gestures of people who are close enough to engage, as opposed to those of bystanders in the distance.
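An engagement zone can be as simple as a distance cut-off applied to everyone the sensor tracks. In this sketch the 2.5 m threshold is a hypothetical value, and choosing the closest engaged person as the one in control is an added assumption, not something the article prescribes.

```python
from dataclasses import dataclass
from typing import List, Optional

ENGAGE_MAX_M = 2.5  # hypothetical: only people closer than this can control the installation


@dataclass
class TrackedPerson:
    person_id: int
    distance_m: float  # distance from the sensor, in meters


def engaged_people(people: List[TrackedPerson], max_m: float = ENGAGE_MAX_M) -> List[TrackedPerson]:
    """People close enough to count as engaged; bystanders further away are ignored."""
    return [p for p in people if p.distance_m <= max_m]


def active_user(people: List[TrackedPerson], max_m: float = ENGAGE_MAX_M) -> Optional[TrackedPerson]:
    """Assumption: when several people are engaged, the closest one is in control."""
    return min(engaged_people(people, max_m), key=lambda p: p.distance_m, default=None)
```

Gestures from anyone outside the zone can then be dropped before they ever reach the gesture recognizer.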

Avoid fatigue

As obvious as it may sound, it’s not always easy to perceive how tiring gesturing can be. You need to observe your users again and again to get a real feel for the experience you’re creating.

Simple points to remember:

- Unless you’re designing a physical game or exercise, make sure people don’t need to raise and hold their hands up too often or for too long.

- Ensure that the mapping between hand trajectory and distance covered on the UI is comfortable, especially for large screens, so that users can point to any part of the screen without strain.

- Using two hands generates less fatigue than a single-handed interaction. You could use the dominant hand to initiate an interaction (showing a slider, for instance) and the other hand as support (adjusting that slider’s value). You don’t need to do everything with a single hand, so explore alternative combinations.

Example of smaller movements mapped to a larger part of the screen for comfortable reach.
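Mapping small hand movements to large screen distances is often done with an “interaction box”: a comfortable region in front of the user that is stretched over the whole screen. The box size below (0.4 m by 0.3 m) and the names `InteractionBox` and `hand_to_screen` are hypothetical; the sketch assumes hand coordinates in meters with y pointing up.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class InteractionBox:
    """A comfortable region in front of the user, in meters (y grows upward).

    Moving the hand across this box covers the whole screen, so no far reach is needed.
    """
    center_x: float
    center_y: float
    width_m: float = 0.4   # hypothetical comfortable horizontal sweep
    height_m: float = 0.3  # hypothetical comfortable vertical sweep


def hand_to_screen(hand_x: float, hand_y: float, box: InteractionBox,
                   screen_w: int, screen_h: int) -> Tuple[float, float]:
    # Normalize the hand position within the box to [0, 1] on each axis.
    u = (hand_x - (box.center_x - box.width_m / 2)) / box.width_m
    v = (hand_y - (box.center_y - box.height_m / 2)) / box.height_m
    # Clamp so reaching beyond the box pins the position to the screen edge.
    u = min(max(u, 0.0), 1.0)
    v = min(max(v, 0.0), 1.0)
    # Screen y grows downward while hand y grows upward, so flip v.
    return u * screen_w, (1.0 - v) * screen_h
```

With these values, a 40 cm sweep covers a 1920-pixel-wide screen edge to edge. Centering the box slightly below shoulder height would let users rest their elbows, though the right placement is something to confirm by observing users.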

Be consistent and enable the same action with any hand

Finally, any gesture a user can trigger with their right hand should also be possible with their left. Beyond accommodating both right-handed and left-handed users, this consistency supports learning and adoption: once you’ve learned a gesture, you can perform it with either hand, with no need to memorize which one it was.
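One way to support both hands without doubling the gesture set is to mirror left-hand trajectories into a shared frame before recognition. In this sketch, x is assumed to be measured from the body’s midline (positive to the user’s right), and the swipe recognizer, its 0.2 m threshold, and all function names are hypothetical.

```python
from typing import List, Literal, Optional, Tuple

Hand = Literal["left", "right"]
Point = Tuple[float, float]  # (x, y) in meters; x measured from the body's midline

MIN_SWIPE_M = 0.2  # hypothetical minimum horizontal travel for a swipe


def to_canonical(points: List[Point], hand: Hand) -> List[Point]:
    """Mirror left-hand trajectories so +x always means "away from the body"."""
    if hand == "left":
        return [(-x, y) for x, y in points]
    return list(points)


def classify_swipe(points: List[Point], hand: Hand) -> Optional[str]:
    """Hypothetical recognizer: one set of rules serves both hands."""
    canon = to_canonical(points, hand)
    if len(canon) < 2:
        return None
    dx = canon[-1][0] - canon[0][0]
    if dx >= MIN_SWIPE_M:
        return "outward"
    if dx <= -MIN_SWIPE_M:
        return "inward"
    return None
```

Mirroring suits body-relative gestures such as pushing outward. For gestures tied to a screen direction (for example, swiping content toward the left edge), you would probably skip the mirroring so both hands move content the same way.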

Consistency needs to run throughout your concept, just as in any UX project. After creating successful gesture-action combinations, consider where similar actions occur in other use cases. Once users are familiar with a gesture, they’ll expect the same gesture to trigger those similar actions too.
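A shared gesture vocabulary can enforce this: each gesture maps to an abstract intent exactly once, and each view decides what that intent means locally. All gesture names, intents, and view names below are hypothetical examples.

```python
from typing import Dict, Optional

# One shared vocabulary: each gesture maps to an abstract intent, defined once.
GESTURE_TO_INTENT: Dict[str, str] = {
    "swipe_outward": "next",
    "swipe_inward": "previous",
    "shhh": "quieter",
}

# Views implement intents, not gestures, so a gesture means the same
# kind of thing wherever it is available.
VIEW_ACTIONS: Dict[str, Dict[str, str]] = {
    "carousel": {"next": "show next item", "previous": "show previous item"},
    "player": {"next": "skip to next track", "previous": "go to previous track",
               "quieter": "lower volume"},
}


def resolve(view: str, gesture: str) -> Optional[str]:
    """Return the view-specific action for a gesture, or None if it doesn't apply."""
    intent = GESTURE_TO_INTENT.get(gesture)
    if intent is None:
        return None
    return VIEW_ACTIONS.get(view, {}).get(intent)
```

Because views never bind raw gestures, a new screen can’t accidentally reuse “swipe outward” for something unrelated to moving forward.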

Aim to create a consistent gestural language that’s easy to discover and remember.

With these design guidelines for gestural interaction in hand, you can start exploring this relatively uncharted creative space. Once you understand the differences, work towards combining air gestures with touch to create unique and fluid user interactions.